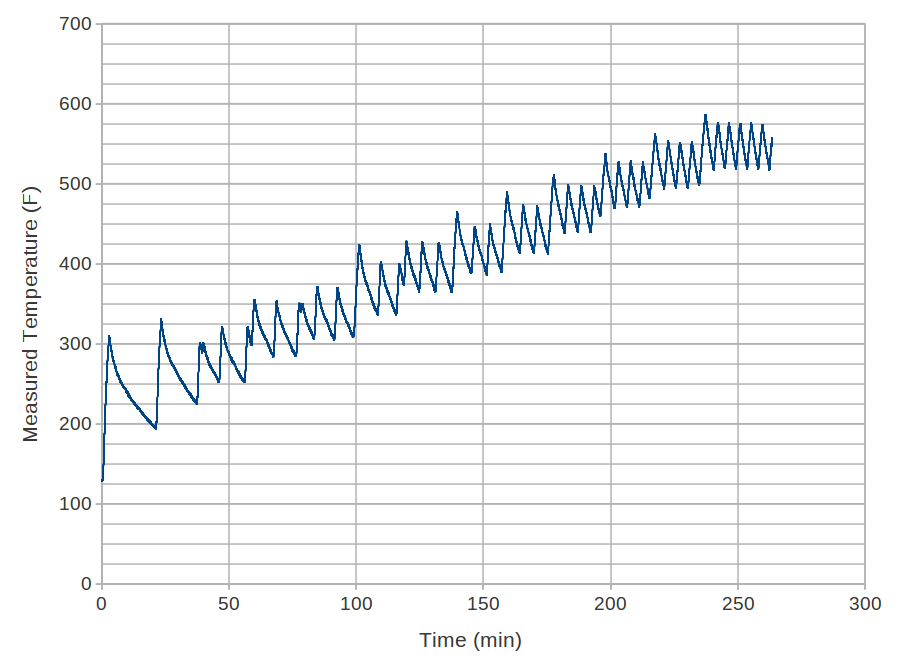

Now that I had established the mis-calibration of my oven, I wanted to characterize it so that I could dial it in to achieve a desired temperature. I know that it’s easier to raise the temperature than to wait for the heat to dissipate, so my plan was to start at a low temperature (200 degrees) and periodically (approx. every 20 minutes) increase it by 25 degrees at a time. Below are the results:

A couple of neat points before I get to the heart of the matter. Notice how the temperature control cycles more frequently at higher temperatures. Remember, I had established that the oven cools off in a way that resembles exponential decay. The result is that it cools off relatively fast (deg/min) at higher temperatures, and slower at lower temperatures, because the temperature difference with its surroundings is larger at the higher temperatures. So, because it’s cooling off faster at higher temperatures, the heating element has to turn on more often, causing the temperature to fluctuate much faster. In fact, at the lower temperatures, I didn’t even get a full cycle over which to average during the 20-minute duration.
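To put rough numbers on that reasoning, here is a minimal sketch that assumes the oven follows Newton's law of cooling (cooling rate proportional to the temperature difference with the room), the textbook model behind exponential decay. The room temperature of 70 degrees and the rate constant `K` are made-up illustrative values, not measurements.

```python
# Sketch of Newton's law of cooling: dT/dt = -K * (T - T_ROOM).
# T_ROOM and K are assumed values for illustration, not fitted to the oven data.
T_ROOM = 70.0   # assumed kitchen temperature, degrees
K = 0.02        # assumed rate constant, 1/min


def cooling_rate(temp):
    """Return how fast the oven loses temperature (deg/min) at a given oven temperature."""
    return K * (temp - T_ROOM)


for temp in (275, 400, 500):
    print(f"{temp} deg: cooling at about {cooling_rate(temp):.1f} deg/min")

# The ratio of two rates does not depend on K, only on the temperature differences.
ratio = cooling_rate(500) / cooling_rate(275)
print(f"cooling is {ratio:.1f}x faster at 500 than at 275")
```

Whatever the real rate constant is, under this model the oven sheds heat roughly twice as fast at 500 as at 275, so the thermostat has to kick in about that much more often.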

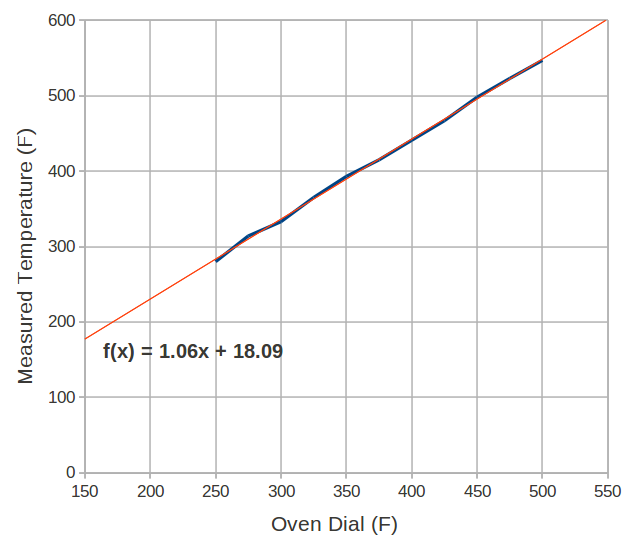

So I pick up the temperature correlation at 275 degrees. My initial inclination was to just take the average value between each period’s peak and trough, but because the curve is not regular (it cools off slower than it heats up), this would bias the temperature higher than what the time-averaged temperature actually is. So I took the time to actually time-average each curve segment between peaks (or multiple peaks if available). The following are those results as well as a linear curve fit:
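For reference, the averaging step can be sketched with the trapezoid rule. This is a minimal illustration, not the exact procedure behind the plot; the function name and the sample readings are made up, chosen so that the cooling leg is slow and the heating leg is fast.

```python
def time_average(times, temps):
    """Trapezoid-rule time average of temps sampled at (possibly uneven) times."""
    if len(times) != len(temps) or len(times) < 2:
        raise ValueError("need at least two matching samples")
    area = 0.0
    for i in range(1, len(times)):
        dt = times[i] - times[i - 1]
        area += 0.5 * (temps[i] + temps[i - 1]) * dt
    return area / (times[-1] - times[0])


# Hypothetical peak-to-peak cycle: four minutes of slowing cool-down,
# then one minute of fast heating back to the peak.
times = [0, 1, 2, 3, 4, 5]               # minutes
temps = [350, 325, 310, 302, 300, 350]   # degrees

midpoint = (max(temps) + min(temps)) / 2
average = time_average(times, temps)
print(f"peak/trough midpoint: {midpoint:.1f}")
print(f"time average:         {average:.1f}")
```

For this made-up cycle the peak/trough midpoint is 325 while the time average comes out near 317, because the curve spends most of its time in the lower part of its range.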

In a perfect world, where the oven works like it’s supposed to, the best fit line would have a slope of 1.0 and an intercept of zero, i.e. what you dial up on the oven is what the temperature actually is. Unfortunately, both values differ from their ideal. So, not only is there an offset between dialed and measured temperatures, but moving the dial by a degree results in a 1.06 degree change in the oven temperature. As a result, the oven is off more at higher temperatures. For example, when the dial reads 300, the oven is at 336: 36 degrees off. But when the dial is at 500, the oven is at 548: 48 degrees off.
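Those two examples are enough to back out the fitted line: a slope of 1.06 together with the point (300, 336) implies an intercept of about 18. That intercept is inferred from the quoted numbers rather than read off the fit itself, so treat it as an assumption. Here is a minimal sketch, with hypothetical function names, of going from dial to oven temperature and back.

```python
# Linear calibration sketch: oven = SLOPE * dial + INTERCEPT.
SLOPE = 1.06       # slope of the fit quoted in the text
INTERCEPT = 18.0   # assumption: inferred from 300 -> 336 and 500 -> 548


def oven_temp(dial):
    """Predict the actual oven temperature for a given dial setting."""
    return SLOPE * dial + INTERCEPT


def dial_for(target):
    """Invert the fit: the dial setting that should give the target oven temperature."""
    return (target - INTERCEPT) / SLOPE


for dial in (300, 500):
    print(f"dial {dial} -> oven about {oven_temp(dial):.0f}")

print(f"for a 350 degree oven, set the dial to about {dial_for(350):.0f}")
```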

I don’t think I’ll be wanting to run that calculation every time something needs cooking. I’ll probably pick a “best” offset value of, say, 40 degrees and hope that the recipes can tolerate a difference of 10 degrees or less.
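As a quick sanity check on that shortcut, this sketch compares a flat 40 degree correction against the linear fit. It assumes the correction means setting the dial 40 degrees below the recipe temperature. It uses the slope of 1.06 from above and an intercept of 18, which is an assumption inferred from the 300 -> 336 and 500 -> 548 examples; the function name is hypothetical.

```python
SLOPE = 1.06       # slope of the fit quoted in the text
INTERCEPT = 18.0   # assumption: inferred from the two quoted examples
OFFSET = 40.0      # flat correction: set the dial this far below the recipe


def error_with_flat_offset(recipe_temp):
    """Oven temperature minus recipe temperature when the dial is set OFFSET degrees low."""
    dial = recipe_temp - OFFSET
    actual = SLOPE * dial + INTERCEPT
    return actual - recipe_temp


for recipe_temp in range(300, 501, 50):
    error = error_with_flat_offset(recipe_temp)
    print(f"recipe {recipe_temp}: oven off by {error:+.1f}")
```

Under these assumptions the flat offset keeps the oven within 10 degrees of the recipe across the whole 300 to 500 range.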

I knew that thermocouple that I bought with no solid plans for using would come in handy some day…